Cerebral Valley

CopilotKit is Defining How Agents and Humans Work Together 🤝

Plus: CEO Atai Barkai on AG-UI adoption by Microsoft, Google and Amazon, why the copilot pattern is holding strong, and learning from human-agent interactions...

CV Deep Dive

Today, we're talking with Atai Barkai, co-founder and CEO of CopilotKit.

CopilotKit is developer infrastructure for building full-stack agentic applications - specifically the layer that connects your agentic backend to your user-facing application. They're now the category leader in this space by a growing margin, serving millions of weekly agent-user interactions across everything from Fortune 100s to unicorn startups.

CopilotKit is also the company behind AG-UI (the Agent-User Interaction protocol), which has been adopted by essentially every major player in the agentic stack - Microsoft, Google, Amazon, Oracle, LangChain, CrewAI, Pydantic, and more. They're at 40k+ GitHub stars, over two million weekly installs, and 10%+ of the Fortune 500 running CopilotKit in production. We last spoke with Atai in late 2024, right before their AI Tinkerers hackathon. Back then they had 12k GitHub stars and were just launching CoAgents. A lot has changed.

In this conversation, Atai (joined briefly by co-founder Uli) explains where AG-UI fits in the protocol landscape, why the copilot pattern is still holding strong even as agents get more autonomous, and shares a fascinating customer story about what happens when you stop trying to fully automate a workforce and start building alongside them instead.

Let's dive in ⚡️

Read time: 9 mins

Our Chat with Atai 💬

It's been over a year since we last spoke. Give us the quick overview - how would you describe CopilotKit today?

We provide developer infrastructure for building full-stack agents, specifically the user interactivity layer - the connectivity between the agentic world and the user-facing application world. At this point we're also the leader in this category by a growing margin. We serve millions of weekly agent-user interactions, from Fortune 100s to unicorn startups to smaller startups and everybody in between.

We're also the company behind the AG-UI protocol, which stands for Agent-User Interaction protocol. It initially emerged from partnerships, first with LangGraph and then with CrewAI. It's since been adopted by essentially everybody else on the startup side, including Mastra, Pydantic, Agno, AG2, and LlamaIndex - I'm probably missing some there. Then, over the last few months, the hyperscalers - Microsoft, Google, Amazon, and Oracle - have all integrated AG-UI into their respective agent frameworks.

The base story is best-in-class agentic front-end infrastructure that integrates with anybody's stack. We're very intentionally a horizontal layer.

For developers who know MCP but haven't encountered AG-UI yet - what's the distinction?

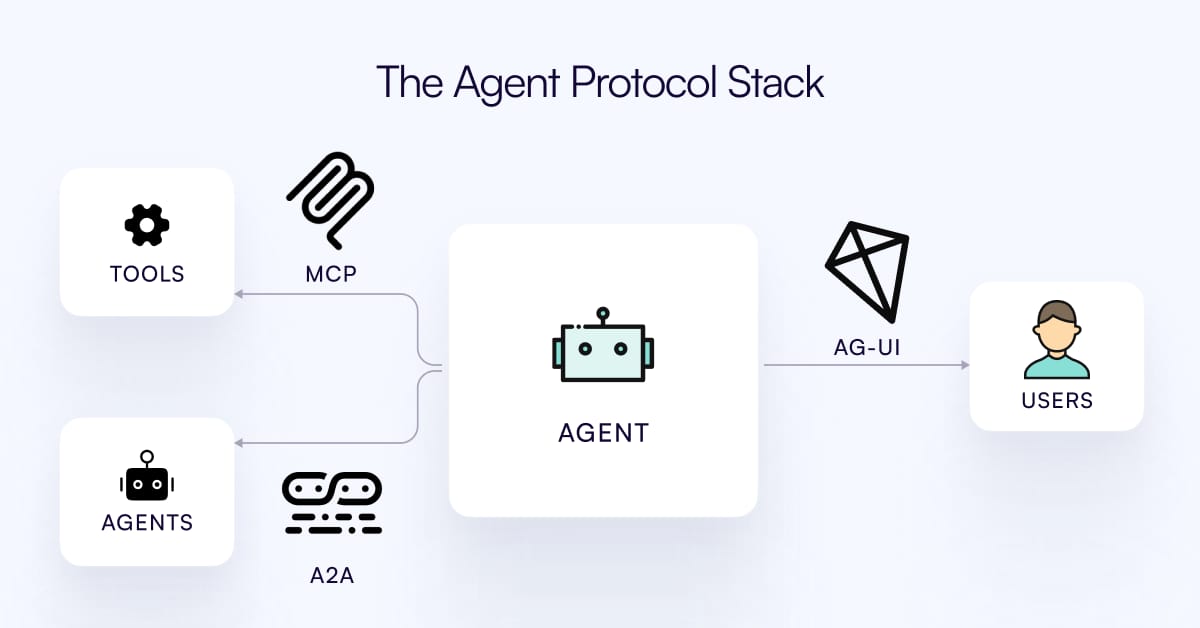

Think of it as a triangle of agent interactions. You have MCP, which connects agents to third-party tools and context. You have A2A, which connects your agentic system to other agentic systems. AG-UI is essentially the third leg of this triangle - it connects your agentic backend to your user-facing application.

What's not on this graph are newer developments: we now have handshakes between AG-UI and both of these protocols. On the MCP side, there's MCP Apps, which just launched - that's kind of the app store for agents. It's not for your own agent; it's for ChatGPT or Claude, these super-agents, and now you can bring your own applets and widgets into them. Our handshake there lets you bring any MCP app designed to work in ChatGPT or Claude into your own application. That's our focus - your own full-stack agentic application.

On the A2A side, we collaborate with Google on what's called A2UI, which is a declarative generative UI spec. We were launch partners on that and continue to own a big part of it going forward.

Last time we talked, you had this framing around an 'economic Turing Test' - the idea that copilots were doing 80-95% of the work with humans still in control as the CEO. Has that thesis evolved?

I think we're actually starting to see AI in the GDP numbers. We're seeing growth in the West that we maybe haven't seen ever, or at least not since the Industrial Revolution. We're seeing GDP growth rates, something like 4.5%, that you usually only see when an economy is coming out of a recession, except now from a normal baseline. It's not only the CapEx spending, but also productivity per worker. You can see AI's impact - it's making people a lot more productive.

How this connects to the protocol landscape: there's a really big refactoring of the world around this new technology. Everyone sees a need for solutions that aren't so product-centric, because when the whole world is refactoring, you can't just connect to somebody's special API that might change completely tomorrow. There's a really strong force toward standardization.

The fundamental technological problem we're solving is that agentic systems essentially break the request-response paradigm that's been powering the Internet for the last 30 years. They're long-running. They have to stream their work as they run. They have to support structured data exchange - tool calls, data updates - at the same time as unstructured voice and text. If you try to just put an agent behind a standard REST API, you run into problem after problem because the glue of the request-response era doesn't fit the agentic era.
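A minimal Python sketch of the difference: instead of returning one response, the agent run below yields a stream of typed events, with text, a tool call, and a state update interleaved on one channel. The event classes (RunStarted, TextDelta, ToolCall, StateUpdate, RunFinished) are hypothetical and only loosely inspired by the kinds of events AG-UI describes; this is not the AG-UI schema or SDK.

```python
from dataclasses import dataclass, field
from typing import Any, Iterator, Union


# Hypothetical event types, loosely inspired by the kinds of events AG-UI
# describes (run lifecycle, streamed text, tool calls, state). Not the real schema.
@dataclass
class RunStarted:
    run_id: str


@dataclass
class TextDelta:
    delta: str


@dataclass
class ToolCall:
    name: str
    args: dict[str, Any]


@dataclass
class StateUpdate:
    patch: dict[str, Any]


@dataclass
class RunFinished:
    run_id: str


Event = Union[RunStarted, TextDelta, ToolCall, StateUpdate, RunFinished]


def fake_agent_run(run_id: str) -> Iterator[Event]:
    """Simulate a long-running agent that streams its work as it goes."""
    yield RunStarted(run_id)
    yield TextDelta("Looking up ")
    yield TextDelta("your order...")
    yield ToolCall("lookup_order", {"order_id": "A-123"})
    yield StateUpdate({"order_status": "shipped"})
    yield TextDelta(" It has shipped.")
    yield RunFinished(run_id)


@dataclass
class ClientView:
    """What a frontend might accumulate while consuming the stream."""
    text: str = ""
    tool_calls: list[ToolCall] = field(default_factory=list)
    state: dict[str, Any] = field(default_factory=dict)
    finished: bool = False


def consume(events: Iterator[Event]) -> ClientView:
    view = ClientView()
    for event in events:
        # A real UI would re-render after each event; here we just accumulate.
        if isinstance(event, TextDelta):
            view.text += event.delta
        elif isinstance(event, ToolCall):
            view.tool_calls.append(event)
        elif isinstance(event, StateUpdate):
            view.state.update(event.patch)  # shallow merge, for simplicity
        elif isinstance(event, RunFinished):
            view.finished = True
    return view


view = consume(fake_agent_run("run-1"))
print(view.text)   # Looking up your order... It has shipped.
print(view.state)  # {'order_status': 'shipped'}
```

The point of the sketch is the shape rather than the transport: a client can render each event as it arrives, which a single REST response cannot express without polling or custom glue.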

So the copilot pattern is still holding even as agents get more autonomous?

Yeah, it's interesting - maybe not surprising, but interesting to see it play out. Overall productivity is really increasing, but programmers aren't getting fired en masse. The copilot pattern is really strong. Don't ask for perfection. Agents can get increasingly autonomous and increasingly intelligent, but if you ask for full autonomy, it just doesn't work. What changes is that human interaction can now happen at a higher and higher level.

The second part is how these systems get so good. There's the concept of deep agents - the bitter lesson applied to LLMs: just let the model run. But you also have learning from interactivity, and the reinforcement layer is really proving itself. For some things you can get reinforcement data automatically, like machine-verifiable rewards. But what we're seeing the leaders do - and OpenAI just released a really interesting writeup about their internal data scientist agent - is learn from the experts who use these systems every single day.

We're seeing this in the coding world. We're betting it's going to spread everywhere, and making that a reality is a big part of our focus.

There's a lot of hype in this space. You're on the front lines seeing what's actually working. Do you think the hype and reality are starting to match?

Yeah, there's no question. Both within our own company and beyond it, the power of these tools has become undeniable at this point, certainly in the coding world. The question is how we got there.

Uli Barkai (co-founder): I'd add that we're particularly bottom-up in what we're building. Some players in the space come from the top down: they imagine how things should be and hand that down as a prescription. Being in the application layer keeps us very practically oriented. The tools we're providing solve real problems. We didn't sit in a room and think through what people need - everything evolves from the bottom up.

We have around 15 million weekly interactions between agents and humans going through our systems. A human is in that loop - it's not just agents running where you don't know whether the output is useful. It's interacting with a person, which is a kind of constant reality test.

Let's talk about traction. Last time you had 12k GitHub stars. What are the numbers looking like now?

We're at around 42,000 GitHub stars now - that's AG-UI and CopilotKit together. Installs are around two million weekly across Python and TypeScript. On the Python side it's north of a million a week, and TypeScript is nearing half a million.

Over 10% of the Fortune 500 is using us in production, counting just the open-source piece, and even more are nearing production. We lean very heavily enterprise, and that's a deliberate design choice. That's our niche in the ecosystem. We're optimizing every part of our stack for enterprise needs, which is often very different from how you'd optimize for startup needs.

Any customer stories that surprised you or illustrated something interesting about where this is all going?

This one's a good one. A Fortune 100 company has a very large team, hundreds of employees, performing a particular function. One of the teams we work with there initially tried to fully automate that function. They got pretty excited a few weeks in and thought they were 70% of the way there. Then they worked on it for a year and were at 74%. They ran into the autonomy wall like everybody else.

Instead, they built a copilot for that workforce. It's already deployed to production and used by the entire workforce.

Now they have a fleet of these workers interacting with the agentic system every single day, essentially correcting it. But the agent can't learn over time on its own, so it keeps making the same mistakes. They want it to get better, and the only place in the world where this agent can learn to improve is from the experts who work alongside it - there's no other source for this data.

We're actually working with them on a system to learn from these interactions, and they've already started to deploy it. We have a paid pilot on that. That's kind of our next focus as a company.

How does that learning system work?

The first wave is based on prompt augmentation, which some call in-context RL. Every single interaction carries a lot of data. First, just the fact that it happened tells you it wasn't perfect. Then, how did it get nudged? Whether it's a manual edit or re-nudging the agent, there's a whole journey from the initial prompt to wherever you end up. Every single piece of it has a lot of information.
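To make "every piece has information" concrete, here is a hypothetical Python sketch that turns one interaction trace into a few simple signals: whether the output was corrected, how many follow-up nudges it took, and how close the final text stayed to the agent's draft (measured with the standard library's difflib). The InteractionTrace fields and the choice of signals are illustrative assumptions, not how CopilotKit records interactions.

```python
import difflib
from dataclasses import dataclass, field


@dataclass
class InteractionTrace:
    """Hypothetical record of one human-agent exchange."""
    initial_prompt: str
    agent_output: str
    re_prompts: list[str] = field(default_factory=list)  # follow-up nudges to the agent
    final_output: str = ""  # what the human ultimately kept ("" means unchanged)


def extract_signals(trace: InteractionTrace) -> dict[str, object]:
    final = trace.final_output or trace.agent_output
    # Ratio in [0, 1]; 1.0 means the human kept the agent's text exactly.
    similarity = difflib.SequenceMatcher(None, trace.agent_output, final).ratio()
    return {
        "was_corrected": final != trace.agent_output or bool(trace.re_prompts),
        "num_nudges": len(trace.re_prompts),
        "similarity": round(similarity, 2),
    }


trace = InteractionTrace(
    initial_prompt="summarize invoice 17",
    agent_output="Total: 100",
    re_prompts=["add the currency"],
    final_output="Total: 100 EUR",
)
print(extract_signals(trace))
```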

The first part is taking all this information and augmenting the prompt. It's very easy for customers to integrate. It essentially looks like swapping the LLM call, and it's also easy for us to iterate on because there's no fine-tuning loop.
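A minimal sketch of what "swapping the LLM call" could look like, assuming a model call is just a function from prompt to text: a wrapper with the same signature looks up past expert corrections and prepends them to the prompt. This is plain few-shot prompt augmentation with naive keyword matching; Correction, CorrectionStore, and with_corrections are hypothetical names, not CopilotKit's API, and a real system would likely use better retrieval.

```python
from dataclasses import dataclass
from typing import Callable

LLMCall = Callable[[str], str]


@dataclass
class Correction:
    """One human-in-the-loop fix: what the agent produced vs. what the expert kept."""
    task: str
    agent_output: str
    human_output: str


class CorrectionStore:
    def __init__(self) -> None:
        self._items: list[Correction] = []

    def record(self, correction: Correction) -> None:
        self._items.append(correction)

    def relevant(self, task: str, limit: int = 3) -> list[Correction]:
        # Naive relevance: any shared lowercase word. A placeholder for real retrieval.
        words = set(task.lower().split())
        hits = [c for c in self._items if words & set(c.task.lower().split())]
        return hits[-limit:]  # most recently recorded matches


def with_corrections(llm: LLMCall, store: CorrectionStore) -> LLMCall:
    """Wrap an LLM call so it keeps the same signature but sees past corrections."""
    def augmented(task: str) -> str:
        examples = store.relevant(task)
        if not examples:
            return llm(task)  # nothing learned yet: pass through unchanged
        lessons = "\n".join(
            f"- For '{c.task}', you wrote '{c.agent_output}'; "
            f"the expert corrected it to '{c.human_output}'."
            for c in examples
        )
        return llm(f"Past corrections from experts:\n{lessons}\n\nTask: {task}")
    return augmented


# Usage with a stub "model" that just echoes its prompt.
store = CorrectionStore()
store.record(Correction("summarize invoice 17", "Total: 100", "Total: 100 EUR"))
llm = with_corrections(lambda prompt: prompt, store)
print(llm("summarize invoice 42"))
```

Because the wrapper has the same signature as the original call, the calling code doesn't change, which matches the "easy to integrate, no fine-tuning loop" framing above.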

After we perfect that, the second step is fine-tuning integrations. We're excited to partner with companies like Fireworks, Modal, and Weights & Biases, and all the model companies have their own fine-tuning APIs as well. That's the second wave.
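For the second wave, the same corrections could be exported as supervised fine-tuning data. The hypothetical sketch below writes one JSON object per line in the common chat "messages" layout of role/content pairs, using the expert's corrected output as the target. Whether a particular provider's fine-tuning API accepts exactly this layout is an assumption to check against its documentation, and the Correction fields are illustrative.

```python
import json
from dataclasses import dataclass


@dataclass
class Correction:
    task: str
    agent_output: str
    human_output: str


def to_sft_jsonl(corrections: list[Correction], system_prompt: str) -> str:
    """Turn corrections into chat-format training examples, one JSON object per line.

    The expert's corrected output becomes the target assistant message. The agent's
    original output is dropped here, though preference-tuning methods could use it
    as the rejected example.
    """
    lines = []
    for c in corrections:
        if c.agent_output == c.human_output:
            continue  # the expert accepted it as-is; no correction to learn from here
        example = {
            "messages": [
                {"role": "system", "content": system_prompt},
                {"role": "user", "content": c.task},
                {"role": "assistant", "content": c.human_output},
            ]
        }
        lines.append(json.dumps(example))
    return "\n".join(lines)


data = [
    Correction("summarize invoice 17", "Total: 100", "Total: 100 EUR"),
    Correction("summarize invoice 18", "Total: 50 EUR", "Total: 50 EUR"),
]
print(to_sft_jsonl(data, "You summarize invoices."))
```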

Let's talk technical for a minute. You launched V1.50 at the end of December. What changed?

V1.50 is essentially a non-breaking 2.0. Everything in V1 continues to work, but there's a whole new set of internals, developer interfaces, and visual interfaces, all built for the non-request-response world that the agentic pattern demands.

AG-UI itself is evolving to be what we're calling a super-protocol, which means it has native support for every single feature of MCP and A2A because there's a lot of overlap - all these are event-based protocols. That's the abstraction for agentic systems.

We introduced a new hook called useAgent. We think of it as the best-in-class connection between essentially any agentic system on the backend - because almost every agentic system is AG-UI compatible now - and your frontend. You can use it for pre-built experiences, but also for full customization: you can hook into the direct raw connection and build custom experiences nobody else has thought of.
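The real hook is a React API whose exact signature isn't described here, so the Python sketch below only mirrors the idea: one raw agent connection feeding both a pre-built experience (a chat transcript) and a custom one (a widget that reacts to state). AgentHandle, Event, and the "text"/"state" event types are hypothetical.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class Event:
    """Hypothetical raw event from the agent: a type tag plus a payload."""
    type: str
    payload: dict[str, Any]


Subscriber = Callable[[Event], None]


class AgentHandle:
    """Hypothetical stand-in for a frontend's connection to a backend agent."""

    def __init__(self) -> None:
        self._subscribers: list[Subscriber] = []

    def subscribe(self, fn: Subscriber) -> Callable[[], None]:
        self._subscribers.append(fn)
        # Return an unsubscribe function, a common pattern for UI hooks.
        return lambda: self._subscribers.remove(fn)

    def dispatch(self, event: Event) -> None:
        # In a real client, events would arrive over a streaming transport.
        for fn in list(self._subscribers):
            fn(event)


# "Pre-built" consumer: a chat transcript that only cares about text.
transcript: list[str] = []

def chat_renderer(event: Event) -> None:
    if event.type == "text":
        transcript.append(event.payload["delta"])


# Custom consumer: reacts to state changes to drive bespoke UI (e.g. map pins).
map_pins: list[tuple[float, float]] = []

def map_widget(event: Event) -> None:
    if event.type == "state" and "location" in event.payload:
        map_pins.append(event.payload["location"])


agent = AgentHandle()
agent.subscribe(chat_renderer)
stop_map = agent.subscribe(map_widget)

agent.dispatch(Event("text", {"delta": "Found a venue."}))
agent.dispatch(Event("state", {"location": (37.77, -122.42)}))
stop_map()  # the custom widget unmounts
agent.dispatch(Event("state", {"location": (40.71, -74.0)}))
```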

What's the hardest technical challenge you're still working on?

Being a horizontal layer is inherently technically challenging because you have to work across the whole stack. But one thing I want to mention: our enterprise product is stateful. There are connections to databases. But it's designed from the ground up to be self-hostable in any enterprise environment.

That inherently makes it much more challenging than building on top of our own managed cloud. We can't rely on off-the-shelf S3 or whatever managed abstraction might be available. You have to build the engine from the ground up. But we see really strong market demand for this - folks can deploy it in their own VPCs, even on-prem.
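One common way to keep a stateful product self-hostable is to have application code depend on a small storage interface rather than on a specific cloud service. The sketch below is a hypothetical illustration of that general pattern, not a description of CopilotKit's engine: ThreadStore is an assumed interface, with an in-memory backend and a SQLite backend from Python's standard library.

```python
import sqlite3
from typing import Optional, Protocol


class ThreadStore(Protocol):
    """Hypothetical storage interface for agent conversation state."""

    def save(self, thread_id: str, state: str) -> None: ...
    def load(self, thread_id: str) -> Optional[str]: ...


class InMemoryStore:
    def __init__(self) -> None:
        self._data: dict[str, str] = {}

    def save(self, thread_id: str, state: str) -> None:
        self._data[thread_id] = state

    def load(self, thread_id: str) -> Optional[str]:
        return self._data.get(thread_id)


class SQLiteStore:
    """Needs no external service, so it runs the same on a laptop, in a VPC, or on-prem."""

    def __init__(self, path: str = ":memory:") -> None:
        self._conn = sqlite3.connect(path)
        self._conn.execute(
            "CREATE TABLE IF NOT EXISTS threads (id TEXT PRIMARY KEY, state TEXT NOT NULL)"
        )

    def save(self, thread_id: str, state: str) -> None:
        with self._conn:  # commits the transaction on success
            self._conn.execute(
                "INSERT OR REPLACE INTO threads (id, state) VALUES (?, ?)",
                (thread_id, state),
            )

    def load(self, thread_id: str) -> Optional[str]:
        row = self._conn.execute(
            "SELECT state FROM threads WHERE id = ?", (thread_id,)
        ).fetchone()
        return row[0] if row else None


def resume(store: ThreadStore, thread_id: str) -> str:
    # Application code depends only on the interface, not on a particular backend.
    return store.load(thread_id) or "{}"
```

Swapping in a Postgres or other enterprise-approved backend would then mean writing one more class with the same two methods.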

The RL piece is also one of the most interesting technical problems to solve, and it's extremely useful. By our place in the stack, we've essentially earned the right to work on a really interesting problem, one that I'd say humanity almost has to solve. We have this technology - LLMs - that's not as smart as humans in some regards but is extremely useful, and it's getting embedded everywhere anyway. How can these systems learn from humans as well as possible?

That's touching fundamental epistemology but in an applied way.

Yeah, exactly. In the past I did research into intelligence - which parts of it are harder than others to digitize. I think we're now running into this from a very applied direction. There's something this current technology can't yet automate, even though it can do a lot. The work is defining this interface, allowing the two to work together, and then maybe extracting knowledge that right now only humans have and turning it into a repeatable form. A human invents a solution the first time, and then you can teach these systems to repeat it.

How big is the team now, and what are you looking for in new hires?

We're at 22 people right now and planning to double in the next year.

In a word - point and shoot. We want to give you a really difficult problem that has a lot of moving pieces and not tell you exactly how to do it or who you should talk to. Imagine you're a mini-founder and just go figure it out. That's what we really value.

We're hiring for Engineering, Forward Deployed Engineering, and a Partnerships and Community Lead role based in SF. The partnership role is literally working with what you could easily argue are the most important teams at the most important companies in the world right now. We're talking to them every week.

If you want to be a core part of powering how the ecosystem evolves in this part of the stack - how UI is evolving, how agent applications are evolving - this is the place to join.

What's on the 2026 roadmap?

On the enterprise side, the self-serve, self-hostable product and the beginning of the RL piece. On the open-source side, continuing to be best-in-class and adding features as the ecosystem evolves.

Something we're very excited about is voice - that's going to have its moment in 2026. Mobile too: right now we're web-centric, so that's an expansion into adjacent territory. Then there's the whole concept of deep agents and finding the best ways for these systems to interact with people - a lot of that seems to come down to parallelization. There's no shortage of problems on the horizon.

Anything else you want people to know?

We have our Luma calendar where we run events in the city - the most interesting events in SF when it comes to generative UI and full-stack agents. Keep an eye on that.

Our thesis is basically every single interaction between humans and technology in the next few years is going to become agentic. It's not like we're all going to sit on the grass all day - we're going to interact with technology a lot, and every one of those interactions is going to be with an agentic system. That human-agent interface layer is going to become really immediate for all of us, all day long.

If you would like us to ‘Deep Dive’ a founder, team or product launch, please reply to this email or DM us on Twitter or LinkedIn.